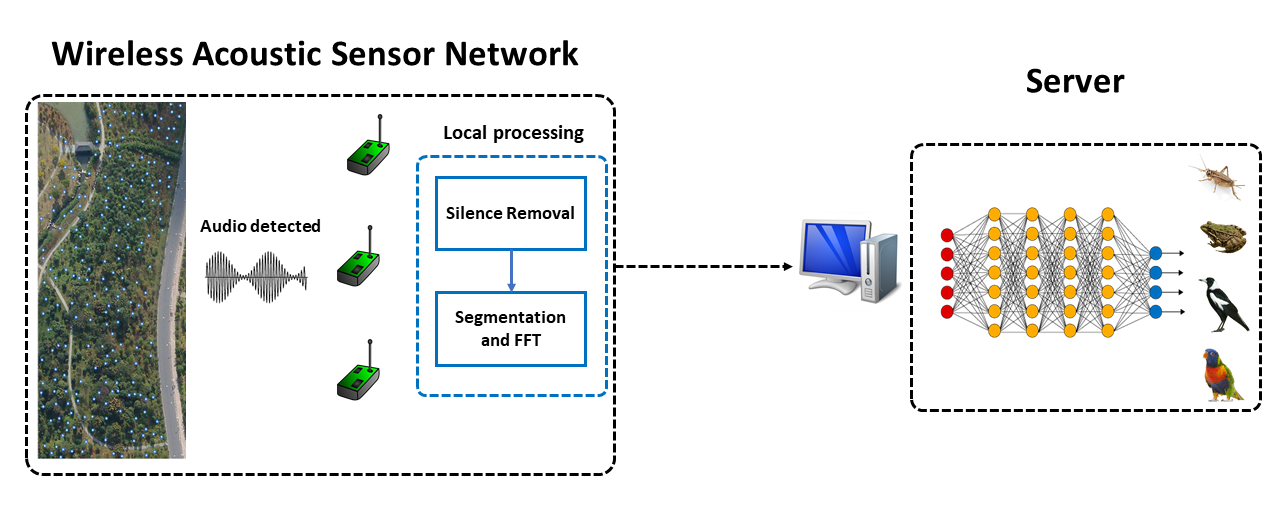

Acoustic Wireless Sensor Network

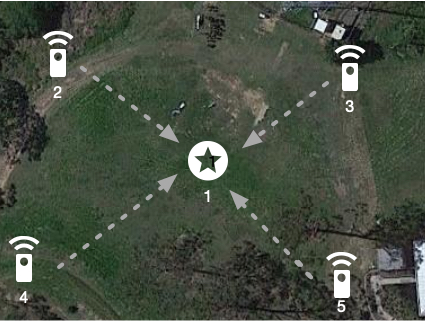

Automatic identification of animal species by their vocalization is an important and challenging task. Although many kinds of audio monitoring system have been proposed in the literature, they suffer from several disadvantages such as non-trivial feature selection, accuracy degradation because of environmental noise or intensive local computation. In this paper, we propose a deep learning based acoustic classification framework for Wireless Acoustic Sensor Network (WASN). The proposed framework is based on cloud architecture which relaxes the computational burden on the wireless sensor node. To improve the recognition accuracy, we design a multi-view Convolution Neural Network (CNN) to extract the short-, middle-, and long-term dependencies in parallel. The evaluation on two real datasets shows that the proposed architecture can achieve high accuracy and outperforms traditional classification systems significantly when the environmental noise dominate the audio signal (low SNR). Moreover, we implement and deploy the proposed system on a testbed and analyse the system performance in real-world environments. Both simulation and real-world evaluation demonstrate the accuracy and robustness of the proposed acoustic classification system in distinguishing species of animals.